Inside the April 1 Drift Protocol Hack: Reconstruction, North Korea Links, and a DeFi Security Playbook

Executive summary

On April 1, the Drift Protocol suffered a roughly $285 million exploit that instantly became one of the highest-profile DeFi incidents of the year. What made it different was not only the dollar figure but the operational sophistication behind the breach: months-long infiltration, impersonation of traders, social engineering, and reportedly in-person contact used to extract credentials or influence staff decisions. In this post-mortem I reconstruct the attack chain from public reporting, assess where DeFi operational security failed, evaluate the evidence tying the attackers to North Korean-linked groups, map contagion risks for Solana and other ecosystems, and finish with a prioritized checklist for defenders.

Quick timeline of infiltration and compromise

Open-source reporting and investigative pieces converge on a similar timeline: attackers began interacting with Drift employees and community members months before the exploit, cultivating trust under the guise of legitimate traders and contributors. According to a deep-dive timeline, adversaries spent roughly six months inside Drift’s environment, engaging as regular counterparties and slowly escalating access and influence before the April 1 strike (Decrypt deep-dive).

Across that window attackers reportedly: (1) built rapport with staff and traders, (2) executed targeted social engineering (including via on-chain identities and off-chain messaging), and (3) used those relationships to influence operational processes that ultimately allowed privileged actions. After the compromise, on-chain movement and rapid liquidations triggered market reactions that hit SOL price and raised alarm among validators across ecosystems.

Reconstructing the attack chain (step-by-step)

Stage 1 — Long-game reconnaissance and persona-building

The adversary did not arrive as a faceless bot. Instead, they created plausible trader personas and engaged in normal market activity to blend into Drift’s community. This social reconnaissance included identifying key employees, community moderators, counterparties, and any operational handoffs where human approval could be exploited.

This pattern — long-term infiltration ahead of a financial strike — is consistent with advanced persistent threat (APT) playbooks where patience increases the probability of breaching high-value controls.

Stage 2 — Social engineering and operational manipulation

With rapport established, the attackers moved to social engineering: tailored messages, plausible requests, and the exploitation of informal operational channels. Public reporting describes scenarios where attackers posed as traders or service providers to obtain sensitive details or influence staff into making risky configuration changes (Decrypt deep-dive).

Notably, investigators flagged in-person contact as part of the playbook — an escalation that greatly increases credibility and lowers skepticism in human targets. This mixing of online and physical social engineering is rare in DeFi incidents but dramatically increases effectiveness when attackers have time to prepare.

Stage 3 — Privilege escalation and foothold consolidation

Once social channels yielded initial credentials or influence, attackers escalated privileges through a combination of credential reuse, misconfigured access controls, and supply-chain-like interactions (e.g., trusted tooling or deployment pipelines). The campaign targeting Drift reportedly exploited operational gaps rather than a single exotic smart-contract bug, a pattern experts described as avoidable with stronger operational security (experts’ criticism).

Stage 4 — Execution: on-chain exploit and rapid exfiltration

With control of key execution paths, attackers triggered the exploit to drain funds. Public accounts and transaction traces show rapid movement of DRIFT and other assets through mixing services and cross-chain bridges, obfuscating the final destination. The sheer speed of value extraction suggests pre-scripted cash-out routines executed immediately after gaining control.

Technical specifics of the exact code path exploited remain under analysis, but multiple reports emphasize that much of the attack hinged on operational access rather than an unknown zero-day in Solana itself.

Technical vector: what we know and what we don’t

Public reporting to date focuses on the human and operational vectors rather than on a specific vulnerable function of the kind catalogued in a CVE. The most credible accounts frame the incident as an operational compromise: attackers manipulated human workflows and leveraged privileged operational keys or tooling to trigger on-chain state transitions.

This distinction matters: a purely smart-contract vulnerability would be a technical patch and audit problem. An operational compromise is a process and people problem — one that persists until organizations fix human and tooling workflows.

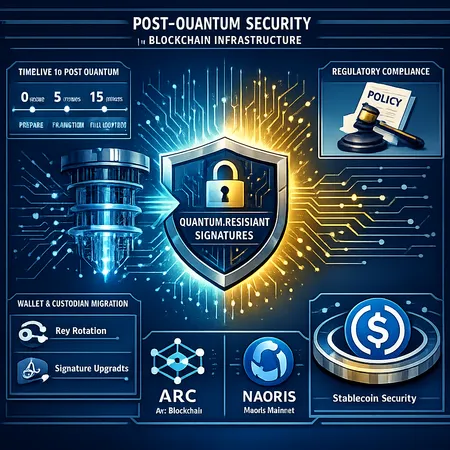

Where technical detail exists, it centers on how administrative or upgrade mechanisms were used to authorize transfers or reconfigure risk parameters. Because Solana’s runtime and off-chain orchestration are architecturally different from those of EVM chains, operational keys and validators play a significant role; compromise of those controls can have immediate, chain-level financial consequences.

How social engineering and in-person tactics worked here

The Drift case demonstrates a hybrid approach: online persona-building to gain trust, then leveraging that trust to request high-friction actions that would normally be resisted. In-person contact compounds trust: shaking hands, attending events, or meeting in cafés converts a digital alias into a verifiable human, which lowers the guard of otherwise cautious staff.

Social engineering vectors reportedly included:

- Tailored direct messages on community channels and private chats.

- Posing as counterparties with plausible trading histories.

- Requests that mirrored legitimate operational needs (e.g., testing a release, validating an oracle input).

- Establishing off-chain escrow or KYC-like interactions to appear legitimate.

This combination is a sobering reminder: even rigorously audited code is vulnerable when people and processes are weak.

Evidence linking the exploit to North Korean actors

Multiple investigative pieces have linked the operation to DPRK-affiliated groups, citing transaction patterns, reuse of infrastructure tied to previous North Korean operations, and intelligence from blockchain forensics. An initial report quantified the exploit and tied the operation to North Korea-linked actors based on overlaps in tooling and laundering techniques (Cryptopolitan initial report).

Decrypt’s reporting adds behavioral context: the attackers lived inside the Drift community for months, employing the kind of patient infiltration and deception that state actors or state-backed groups have historically favored for high-value operations (Decrypt deep-dive).

While on-chain evidence rarely yields 100% attribution, the mix of laundering patterns, linked infrastructure, and operational tradecraft makes attribution to North Korean-linked actors persuasive to many analysts. That attribution matters for legal and geopolitical responses; state-backed groups change the calculus for sanctions, law enforcement prioritization, and cross-border cooperation.

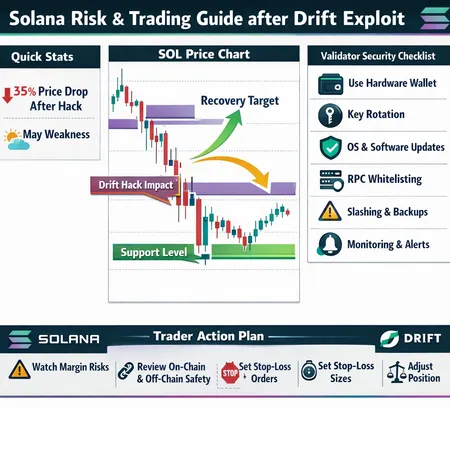

Immediate market impact on SOL and contagion signals

The exploit’s market ripple was immediate. SOL price experienced downward pressure as traders priced in liquidation risk, cross-margin failures, and potential on-chain liquidity shocks; short-term volatility spiked after the exploit surfaced and funds began to move (market reaction and SOL price impact).

More important than the short-term price dip is the change in risk perception. Liquidity providers and margin traders that rely on confidence in protocol ops pulled back. Validators and custodians raised alerts and hardened acceptance criteria for off-chain signatures or new integrations. Some validators publicly warned about social engineering spillover — a message that resonates beyond Solana to other chains and custodial services (validator warning).

For many market participants, the Drift breach recalibrated the cost of counterparty risk on Solana-native products, especially derivatives. For broader ecosystems that host cross-chain bridges and integrations, the incident underscored how operational failures in one protocol can cascade through liquidity and trust channels.

For traders and risk teams monitoring macro signals, it’s worth noting that memecoins and NFT markets can be second-order victims: when liquidity tightens, speculative assets are among the first to slide.

Legal, regulatory and governance fallout: civil negligence arguments

Experts were quick to frame parts of the incident as avoidable and a failure of basic DeFi operational security. Commentary after the hack accused Drift of shortcomings in human controls and process hygiene, the kinds of lapses that could be characterized as civil negligence in litigation or regulatory proceedings (experts’ criticism).

Possible regulatory responses and legal vectors include:

- Civil suits by affected counterparties claiming negligence in operational security and duty of care.

- Increased scrutiny from financial regulators over custody, operational resilience, and third-party vendor controls for major DeFi protocols.

- Faster calls for mandatory incident disclosure timelines and forensics standards in jurisdictions that want tighter oversight of crypto market stability.

If attribution to state-backed actors is accepted, additional outcomes could include sanctions, targeted law-enforcement collaboration, or diplomatic pressure to curb laundering channels — all of which complicate recovery and restitution pathways.

Contagion risks for Solana and other chains

There are three practical contagion channels to map:

- Counterparty and liquidity withdrawal: LPs and market-makers may reduce exposure on Solana derivatives, widening spreads and impairing hedging efficiency.

- Validator and infrastructure ripple effects: Validators exposed to similar social engineering vectors may tighten governance, delay upgrades, or change signer policies, which can temporarily degrade liveness or throughput.

- Cross-chain bridges and laundering networks: Funds laundered through bridges can propagate risk to other ecosystems, forcing DeFi teams on those chains to react and potentially freeze assets.

Social engineering specifically is a cross-chain threat: validators, custodians, or community managers in other ecosystems can be targeted with the same persona-based campaigns. A recent validator alert emphasized how social engineering attacks can spill over and threaten users across different ledgers (validator warning).

These contagion vectors mean that Solana security incidents rarely remain local; they affect the broader crypto market’s trust, with second-order effects on NFTs, memecoins, and cross-chain DeFi integrations.

Prioritized checklist: hardening against multi-month intrusion campaigns

Below is a practical, prioritized checklist for DeFi security and risk teams. It assumes attackers may employ patient, multimodal campaigns combining online personas, in-person contact, and tooling abuse.

Governance and human controls

- Rotate and compartmentalize keys: ensure upgrades, admin actions, and treasury movements require multi-party approval with geographically separated signers.

- Strict onboarding hygiene: verify identities for anyone claiming trading or integration roles; treat in-person meetings as information that increases risk rather than a trust shortcut.

- Least-privilege access: enforce role-based access for internal tooling and limit who can push changes to production.

- Incident playbooks and escalation: predefine who signs off on critical operational actions and require recorded approvals.
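
The multi-party approval item above can be sketched as a simple policy check. This is a minimal illustration only, not Drift's actual governance logic: the `Approval` type, the `is_authorized` function, and the thresholds (three signers, two regions) are hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Approval:
    signer: str  # hypothetical signer identifier
    region: str  # hypothetical location label for that signer


def is_authorized(approvals, allowed_signers, min_signers=3, min_regions=2):
    """Return True only if enough distinct allowed signers, spread over
    enough regions, have approved the action."""
    # Keep one entry per signer so repeated approvals cannot inflate the count.
    valid = {a.signer: a.region for a in approvals if a.signer in allowed_signers}
    enough_signers = len(valid) >= min_signers
    enough_regions = len(set(valid.values())) >= min_regions
    return enough_signers and enough_regions


if __name__ == "__main__":
    allowed = {"alice", "bob", "carol"}
    votes = [Approval("alice", "eu"), Approval("bob", "us"), Approval("carol", "apac")]
    print(is_authorized(votes, allowed))
```

In practice a check like this would sit in front of on-chain multisig tooling rather than replace it; the point is that duplicate approvals, unknown signers, and signers clustered in one place should all fail the gate.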

Communications and social engineering defenses

- Phishing-resistant MFA: prefer hardware security keys for all opsec-sensitive roles.

- Channel hardening: avoid critical approvals over casual chat. Use authenticated, auditable ticketing systems for approvals.

- Red-team social engineering: simulate months-long persona attacks to measure human resilience.

- In-person contact policy: require security vetting for any in-person engagements that may involve exchange of materials or privileged requests.
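
One way to make approvals "authenticated and auditable" rather than chat-based is to have the ticketing system attach a keyed tag to each approval record, which downstream tooling verifies before acting. The sketch below assumes a shared secret held by the ticketing system; the record fields and the function names `tag_approval` and `verify_approval` are hypothetical, and a production setup would more likely use per-user asymmetric signatures.

```python
import hashlib
import hmac
import json


def _canonical(record: dict) -> bytes:
    # Serialize deterministically so the same record always yields the same tag.
    return json.dumps(record, sort_keys=True, separators=(",", ":")).encode("utf-8")


def tag_approval(key: bytes, record: dict) -> str:
    """Compute an HMAC-SHA256 tag over an approval record."""
    return hmac.new(key, _canonical(record), hashlib.sha256).hexdigest()


def verify_approval(key: bytes, record: dict, tag: str) -> bool:
    """Check the tag in constant time; any edit to the record invalidates it."""
    return hmac.compare_digest(tag_approval(key, record), tag)


if __name__ == "__main__":
    key = b"demo-key-not-for-production"  # assumption: secret held by the ticketing system
    record = {"ticket": "OPS-1", "approver": "alice", "action": "rotate-admin-key"}
    tag = tag_approval(key, record)
    print(verify_approval(key, record, tag))
```

A request that arrives over a casual chat channel has no valid tag, so tooling that enforces this check cannot be driven by a persuasive message alone.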

Tooling, supply chain and CI/CD

- Secure CI/CD: require signed artifacts and reproducible builds. Gate deployments behind cryptographic checks and multi-signer approvals.

- Vendor controls: vet third-party services and require contractual security SLAs.

- Runtime monitoring: instrument tooling to detect anomalous admin actions or sudden privilege escalations.
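
The "gate deployments behind cryptographic checks" item can be illustrated with a digest allowlist. This is a deliberately simplified stand-in: it pins SHA-256 digests that reviewers have approved rather than verifying a real signature, and the names `may_deploy` and `approved_digests` are hypothetical.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of a build artifact."""
    return hashlib.sha256(data).hexdigest()


def may_deploy(artifact: bytes, approved_digests: set) -> bool:
    """Allow deployment only if the artifact's digest was approved in advance."""
    digest = sha256_hex(artifact)
    # compare_digest avoids leaking match position through timing differences.
    return any(hmac.compare_digest(digest, approved) for approved in approved_digests)


if __name__ == "__main__":
    build = b"release-1"
    approved = {sha256_hex(build)}  # assumption: recorded by reviewers after a reproducible build
    print(may_deploy(build, approved))
    print(may_deploy(b"release-1-tampered", approved))
```

Combined with reproducible builds, a check like this means an attacker who gains influence over one engineer still cannot ship a modified artifact without also changing the approved digest list, which should itself require multi-signer approval.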

Forensics, monitoring and post-incident response

- Immutable logging: centralize and preserve audit logs off-site for forensic use.

- Transaction escape-hatch: design irreversible operations with human-in-the-loop checks where feasible (e.g., time delays for large transfers).

- Post-incident communication plan: transparent and timely updates reduce misinformation and market panic.
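
The time-delay idea in the escape-hatch item can be sketched as a small timelock rule: large transfers are queued and become executable only after a waiting period, during which a human can cancel them. The threshold, the delay, and the names `PendingTransfer` and `can_execute` are illustrative assumptions, not values from any reported Drift configuration.

```python
from dataclasses import dataclass


@dataclass
class PendingTransfer:
    amount: float            # transfer size in some reference unit
    queued_at: float         # when the transfer was queued, in epoch seconds
    cancelled: bool = False  # set to True by a human reviewer to veto


def can_execute(transfer, now, threshold=100_000.0, delay_seconds=24 * 3600):
    """Small transfers pass immediately; large ones must wait out the delay."""
    if transfer.cancelled:
        return False
    if transfer.amount < threshold:
        return True
    return now - transfer.queued_at >= delay_seconds


if __name__ == "__main__":
    big = PendingTransfer(amount=500_000.0, queued_at=1_000.0)
    print(can_execute(big, now=1_000.0 + 3600))       # one hour after queuing
    print(can_execute(big, now=1_000.0 + 24 * 3600))  # after the full delay
```

The delay does not stop a compromised operator from queuing a drain, but it converts an instant loss into a window in which monitoring and other signers can intervene.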

Strategic and legal measures

- Insurance and runway: maintain insurance where possible and treasury runway to absorb shocks.

- Law-enforcement liaison: have pre-established contacts for cross-border investigation cooperation.

- Sanctions hygiene: monitor counterparties and mixing services for links to sanctioned actors; block or freeze at-risk pathways quickly.

This checklist is intentionally operational — these are the controls that mitigate multi-month APT-style threats, not just quick audit fixes.

How to prioritize remediation in the first 30/90/180 days

- First 30 days: stabilize operations — rotate critical keys, freeze risky integrations, and publish an incident timeline to stakeholders. Start forensic preservation and communication.

- First 90 days: rebuild trust — implement immediate governance fixes (multi-sig, hardware keys), conduct social-engineering red-team exercises, and complete a formal root-cause analysis with independent firms.

- First 180 days: institutionalize change — bake new controls into developer workflows, update onboarding and event response playbooks, and pursue insurance/regulatory alignment.

Longer-term, teams should assume adversaries iterate: what stops them today may be evaded tomorrow. Continuous improvement is essential.

Conclusion: the hard truth and an operational mandate

The Drift Protocol exploit is a cautionary tale that modern DeFi risk is often human-first. Code audits remain necessary; they are not sufficient when human trust and process controls are exploited over months. For security-focused operators and risk teams, the practical takeaway is clear: treat social engineering and in-person contact as first-class threats, enforce stringent operational separations, and assume sophisticated actors — including state-backed groups — will patiently probe any weakness.

For teams integrating across ecosystems, including those building on Solana or monitoring collateral movements, this incident should trigger immediate hardening and cross-team rehearsals. For market participants worried about contagion, monitor validator notices and counterparty exposure closely; friction in one protocol often metastasizes.

Bitlet.app and other service providers should view this as a reminder that platform-level risk assessments and client education are essential parts of the DeFi safety stack.

Sources

- Initial report tying the exploit to North Korea-linked actors: https://www.cryptopolitan.com/drift-protocol-hack-north-korea-ai/

- Deep-dive reporting on six-month infiltration and persona-based tactics: https://decrypt.co/363364/north-korean-hackers-spent-six-months-infiltrating-drift-before-285m-exploit

- Experts criticizing Drift’s operational failures: https://www.cryptopolitan.com/experts-slam-drift-after-280m-hack/

- Market reaction and SOL price impact coverage: https://cryptonews.com/news/solana-price-prediction-285m-hack/

- Validator warning about social engineering spillover risks: https://u.today/xrpl-validator-sounds-alarm-to-xrp-users-on-social-engineering-threat?utm_source=snapi

For continued updates and practical guides on protocol security, follow industry threads and operator advisories, and remember to audit processes as aggressively as you audit code.