Inside the $280M Drift Protocol Hack: Forensic Timeline, Attribution, and a Hardening Playbook

Executive summary

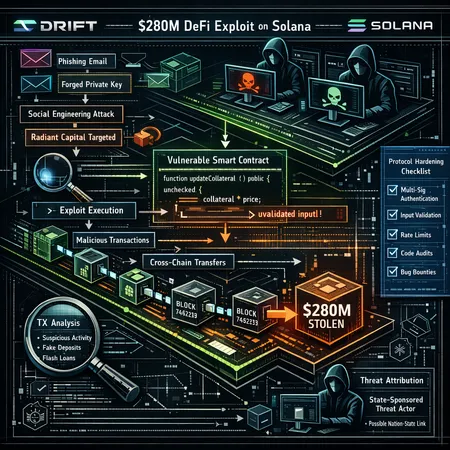

The Drift Protocol exploit that drained roughly $280 million on Solana was not a single‑hour opportunistic hack. Public reporting and forensic updates show a months‑long campaign of reconnaissance, account infiltration and staged access escalation. In this post I reconstruct the chain of events from available investigations, evaluate the balance between social engineering and code vulnerabilities, explore the links to actors previously tied to Radiant Capital, and deliver a prioritized checklist for hardening margin and perpetual protocols on high‑throughput chains like Solana.

This piece is written for DeFi engineers and security teams who maintain complex margin and derivatives systems and need a tactical, risk‑ranked plan to reduce catastrophic exposures.

Reconstructing the attack: a timeline of preparation and exploitation

Publicly available reporting paints a picture of patient preparation rather than a quick strike. Independent timelines suggest the adversary spent months on reconnaissance and infiltration before triggering the final withdrawal sequence.

Months of reconnaissance and foothold (as assembled from reporting)

- Investigators reconstructed a long planning phase in which attackers probed the target, likely mapping administrative workflows, off‑chain tools, and custodial touchpoints. Early reporting describes the operation as a months‑long infiltration rather than an impulsive exploit (Aped.ai report).

- Blockonomi and other outlets highlight techniques consistent with advanced persistent threat (APT) behavior: lateral movement, staged account compromises, and careful timing to avoid early detection (Blockonomi timeline and analysis).

Credentialing, social paths, and the pivot

- Preliminary probe notes suggest the actors leveraged access paths and knowledge similar to those used in the Radiant Capital incidents. Cointelegraph’s reporting links early investigative signals to actors connected with that earlier activity, implying reuse of tradecraft and infrastructure (Cointelegraph preliminary findings).

- The campaign’s core success vector appears to have been operational access — control over keys, admin interfaces, or signed transactions — gained through a mix of social engineering and compromise of off‑chain systems rather than an exploit of Drift’s on‑chain logic alone.

Execution and extraction

- Once privileged paths were secured, the intruders executed a withdrawal sequence and cross‑chain extraction to obscure the flow of funds. The speed and scale of a $280M drain suggest prior testing and dry runs to ensure the exploit would succeed without tripping anti‑fraud controls.

Taken together, the timeline supports a hypothesis: months of human‑level intelligence gathering, then targeted misuse of privileged operational access to subvert otherwise secure on‑chain code.

Attack chain: step‑by‑step reconstruction

1) Reconnaissance

The actors enumerated admin roles, build and deploy pipelines, and third‑party integrations. Public reporting describes targeted efforts against accounts and keys across the custody and RPC layers.

2) Initial foothold

Footholds were established in off‑chain infrastructure — perhaps by phishing, compromised third‑party vendors, or stolen credentials — enabling persistent access to tooling used by the protocol team.

3) Privilege escalation and lateral movement

With foothold access, attackers escalated privileges and moved laterally into more sensitive systems. This is classic APT behavior: patience, blending in, and avoiding noisy actions that would alert defenders.

4) Staging and rehearsals

Actors staged transaction templates and tested extraction pathways, likely using low‑value transfers and dormant wallets to validate the plan.

5) Trigger and exfiltration

Once confident, the group executed the withdrawal routines and funneled assets through cross‑chain bridges and mixers to launder proceeds.

This chain underscores that many high‑severity breaches in DeFi are operational failures — compromised human or vendor trust — rather than purely code‑level bugs.

Social engineering vs. code vulnerabilities: which was decisive?

The Drift case tilts heavily toward social engineering and operational compromise as the decisive enabler. Key observations:

- The absence of a simple, widely publicized on‑chain exploit pattern suggests the on‑chain programs did not contain a single catastrophic bug that trivially allowed drains. Instead, attackers abused legitimate admin paths.

- Early public reconstructions emphasize credential compromise and infiltration consistent with the playbooks used in prior Radiant Capital incidents, which also relied on operational access.

That said, code and architecture still matter. If a protocol grants broad unilateral power to an admin key with no timelock, circuit breaker, or multisig guardrails, any operational compromise becomes a systemic risk. In other words, social engineering may hand attackers the keys, but weak key governance and upgradeability design are what let those keys wreck the protocol.

Attribution and the state‑backed threat model

Reports have discussed links between the Drift attackers and actors associated with Radiant Capital, and some outlets suggest state‑sponsored involvement. Blockonomi’s analysis describes a North Korean group spending six months infiltrating the protocol and characterizes the operation as consistent with nation‑level resource commitment and long timelines. Cointelegraph reports preliminary findings connecting the probe to actors behind Radiant.

Attribution in crypto is inherently probabilistic. Still, the combination of:

- long dwell time,

- sophisticated OPSEC and cash‑out routes, and

- reuse of tactics and infrastructure observed in earlier incidents tied to Radiant Capital,

makes attribution to a well‑resourced group plausible. If state or state‑sponsored actors are involved, defenders must assume greater persistence, deeper supply‑chain compromises, and targeted human‑intelligence operations.

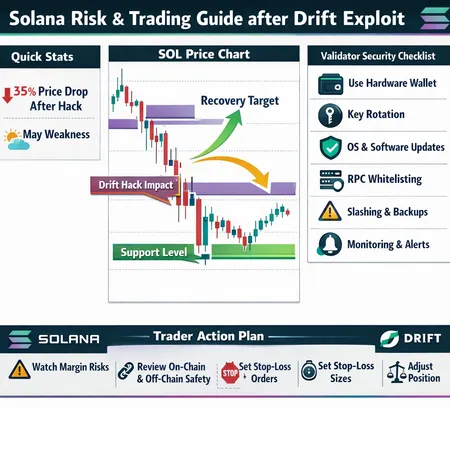

What this reveals about Solana ecosystem posture

Solana’s high throughput and program model make it attractive for derivatives and perp protocols — but that same environment increases operational complexity:

- Many teams run fast CI/CD and frequent program upgrades; rapid upgradeability without strict governance increases risk when admin keys are compromised.

- Heavy reliance on off‑chain builders, centralized RPC nodes, and vendor services expands the perimeter where social engineering can succeed.

- Cross‑program interactions and composability amplify blast radius: a single privileged actor can influence several integrated systems.

The lesson: performance is valuable, but operational discipline must match speed. Protocols on Solana should treat off‑chain tooling and personnel as first‑class attack surfaces.

Similar ecosystem tradeoffs recur in other post‑mortems; for teams building on DeFi rails, this incident is an urgent reminder.

Regulatory, insurance, and market impacts

The scale and likely sophistication of this incident will have ripple effects:

- Regulators are likely to frame state‑backed or high‑impact exploits as systemic risks; expect more scrutiny of custody, admin governance, and vendor due diligence.

- Insurance carriers will tighten underwriting, raise premiums, and demand stronger technical proofs of key management, timelocks, and multisig for coverage.

- Cross‑chain bridges and custody providers may adopt stricter KYC/AML checks and slashing or clawback clauses for dubious flows, complicating recovery efforts.

Protocols should assume that post‑incident liability conversations will center on whether adequate operational controls were in place — not just whether the code was audited.

Bitlet.app and other platforms offering custody and installment services will likely see heightened demand for transparent attestations of operational security.

A prioritized hardening checklist for margin and perp protocols

Below is a practical, ranked checklist DeFi engineers can use. Items near the top are highest impact and typically achievable within weeks.

1) Enforce multisig and quorum for any admin actions

Require n‑of‑m multisig with geographically and organizationally diverse signers for upgrades, parameter changes, and emergency withdrawals.
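The quorum rule can be expressed in a few lines. This is a minimal Python sketch under two assumptions. First, signatures have already been cryptographically verified upstream, for example by an on‑chain multisig program. Second, each registered signer carries a hypothetical organization label. The sketch rejects approvals that meet the raw count but come from too few independent organizations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SignerPolicy:
    """Hypothetical signer registry: verified signer id -> organization label."""
    signers: dict[str, str]
    threshold: int          # n in n-of-m
    min_distinct_orgs: int  # organizational diversity requirement


def approves(policy: SignerPolicy, signer_ids: set[str]) -> bool:
    """True if already-verified signer ids satisfy both quorum and diversity."""
    # Ignore ids that are not in the registry (e.g. an attacker-added key).
    valid = {s for s in signer_ids if s in policy.signers}
    orgs = {policy.signers[s] for s in valid}
    return len(valid) >= policy.threshold and len(orgs) >= policy.min_distinct_orgs


# Illustrative 3-of-5 policy that also requires three distinct organizations.
policy = SignerPolicy(
    signers={
        "alice": "core-team",
        "bob": "core-team",
        "carol": "auditor",
        "dave": "foundation",
        "erin": "foundation",
    },
    threshold=3,
    min_distinct_orgs=3,
)

print(approves(policy, {"alice", "bob", "mallory"}))  # False: only two registered signers
print(approves(policy, {"alice", "bob", "carol"}))    # False: three signers, two organizations
print(approves(policy, {"alice", "carol", "dave"}))   # True: three signers, three organizations
```

Checking organizational diversity in addition to the raw count means that compromising one team's machines, or one vendor, is not enough to reach quorum.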

2) Introduce timelocks and human‑readable proposals

Every admin upgrade or critical parameter change must be subject to a meaningful timelock (e.g., 24–72 hours) and a public proposal flow so off‑chain observers can react.
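To make the timelock concrete, here is a minimal queue‑then‑execute sketch in Python. The 48‑hour delay is an assumed value inside the 24–72 hour range above, and proposal ids are hypothetical strings. Time is passed in explicitly so the logic is easy to test; a real deployment would read the chain's clock and keep the queue in on‑chain state.

```python
from dataclasses import dataclass, field

MIN_DELAY_SECONDS = 48 * 3600  # assumed delay, within the 24-72h range


@dataclass
class Timelock:
    min_delay: int = MIN_DELAY_SECONDS
    # proposal id -> earliest execution time (seconds)
    queued: dict[str, float] = field(default_factory=dict)

    def queue(self, proposal_id: str, now: float) -> float:
        """Publish a proposal; returns the earliest time it may execute."""
        if proposal_id in self.queued:
            raise ValueError("proposal already queued")
        eta = now + self.min_delay
        self.queued[proposal_id] = eta
        return eta

    def cancel(self, proposal_id: str) -> None:
        """Cancel during the delay (caller authorization omitted in this sketch)."""
        self.queued.pop(proposal_id, None)

    def execute(self, proposal_id: str, now: float) -> None:
        eta = self.queued.get(proposal_id)
        if eta is None:
            raise PermissionError("proposal was never queued or was cancelled")
        if now < eta:
            raise PermissionError(f"timelock active for {eta - now:.0f} more seconds")
        del self.queued[proposal_id]
        # The actual upgrade or parameter change would be applied here.


tl = Timelock()
eta = tl.queue("upgrade-v2", now=0)
try:
    tl.execute("upgrade-v2", now=3600)  # one hour later: rejected
except PermissionError as err:
    print(err)                          # timelock active for 169200 more seconds
tl.execute("upgrade-v2", now=eta)       # after the full delay: allowed
```

The cancel path is what gives observers leverage. A malicious upgrade queued with a stolen key is visible for the whole delay and can be stopped before it executes.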

3) Immutable or narrowly scoped admin roles

Minimize privileged functions; prefer upgrade patterns that limit the scope of admin changes. Make truly dangerous powers immutable when possible.
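One way to express narrow scoping is an explicit role‑to‑action map, plus hard‑coded bounds on what even an authorized role may change. The roles, actions, and funding‑cap bounds below are hypothetical. The `FORBIDDEN` set stands in for powers that would simply not exist in an immutable program.

```python
from enum import Enum, auto


class Action(Enum):
    SET_FUNDING_CAP = auto()
    PAUSE_MARKET = auto()
    UPGRADE_PROGRAM = auto()
    WITHDRAW_VAULT = auto()


# Hypothetical role map: each role holds only the actions it needs.
ROLE_PERMISSIONS: dict[str, frozenset[Action]] = {
    "risk_committee": frozenset({Action.SET_FUNDING_CAP}),
    "guardian": frozenset({Action.PAUSE_MARKET}),
    "upgrade_multisig": frozenset({Action.UPGRADE_PROGRAM}),
}

# Powers no role may ever exercise (stand-in for "immutable" in this sketch).
FORBIDDEN: frozenset[Action] = frozenset({Action.WITHDRAW_VAULT})

# Assumed hard bounds for an hourly funding-rate cap.
FUNDING_CAP_BOUNDS = (0.0001, 0.01)


def authorize(role: str, action: Action) -> bool:
    if action in FORBIDDEN:
        return False
    return action in ROLE_PERMISSIONS.get(role, frozenset())


def set_funding_cap(role: str, new_cap: float) -> float:
    """Even an authorized role can only move the cap within fixed bounds."""
    if not authorize(role, Action.SET_FUNDING_CAP):
        raise PermissionError(f"{role} may not change the funding cap")
    lo, hi = FUNDING_CAP_BOUNDS
    if not lo <= new_cap <= hi:
        raise ValueError("funding cap outside hard-coded bounds")
    return new_cap
```

Because `WITHDRAW_VAULT` sits in `FORBIDDEN`, granting it to a role by mistake has no effect. A stolen risk‑committee key can only move the funding cap within its fixed bounds.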

4) Pause, circuit breakers, and on‑chain emergency modes

Implement pausable markets and global circuit breakers that can be triggered by a verified committee. Ensure the pause itself cannot be used to steal funds.
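The following is a minimal sketch, assuming a single vault with integer balances and a hypothetical per‑window outflow limit.

- The pause is only a flag.
- No code path moves funds anywhere except back to their owner.
- An outflow breaker pauses the vault instead of processing a withdrawal that would exceed the limit.

In an on‑chain program, a failed transaction reverts its state changes. That is why the sketch returns `False` after tripping the breaker instead of raising. Unpausing, which would presumably go through the timelocked governance flow, is left out.

```python
from dataclasses import dataclass, field


@dataclass
class Vault:
    balances: dict[str, int] = field(default_factory=dict)
    guardians: frozenset[str] = frozenset()
    max_outflow_per_window: int = 1_000_000  # hypothetical breaker limit
    paused: bool = False
    outflow_this_window: int = 0

    def pause(self, caller: str) -> None:
        if caller not in self.guardians:
            raise PermissionError("only guardians may pause")
        # Pausing flips a flag and nothing else: it grants no power over balances.
        self.paused = True

    def start_new_window(self) -> None:
        self.outflow_this_window = 0

    def withdraw(self, owner: str, amount: int) -> bool:
        """Pay `owner` from their own balance; False means the breaker tripped.

        There is deliberately no method that sends funds to anyone but the owner.
        """
        if self.paused:
            raise RuntimeError("vault is paused")
        if amount <= 0 or self.balances.get(owner, 0) < amount:
            raise ValueError("invalid amount")
        if self.outflow_this_window + amount > self.max_outflow_per_window:
            self.paused = True  # trip the breaker and refuse, rather than erroring
            return False
        self.balances[owner] -= amount
        self.outflow_this_window += amount
        return True


vault = Vault(balances={"alice": 2_000_000}, guardians=frozenset({"guardian-1"}))
print(vault.withdraw("alice", 600_000))  # True
print(vault.withdraw("alice", 600_000))  # False: breaker trips, vault is now paused
```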

5) Defense in depth for key management

Use hardware security modules (HSMs), multi‑party computation (MPC), or custodial threshold signing with strict SLAs. Rotate keys periodically and record provenance.
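HSMs and MPC are infrastructure rather than code. The rotation and provenance requirements, however, can be enforced by a simple audit over a key inventory. This sketch assumes a hypothetical inventory format, a 90‑day rotation policy, and an allow‑list of custody types. All three are illustrative policy choices, not details from the incident reporting.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

MAX_KEY_AGE = timedelta(days=90)                # assumed rotation policy
APPROVED_CUSTODY = {"hsm", "mpc", "threshold"}  # assumed allow-list


@dataclass(frozen=True)
class KeyRecord:
    key_id: str
    custody: str          # where the key material lives
    created_at: datetime  # provenance: when the key was generated
    created_by: str       # provenance: who ran the key ceremony


def audit_keys(keys: list[KeyRecord], now: datetime) -> list[str]:
    """Return human-readable findings; an empty list means the inventory passes."""
    findings = []
    for k in keys:
        if k.custody not in APPROVED_CUSTODY:
            findings.append(f"{k.key_id}: custody '{k.custody}' is not approved")
        if now - k.created_at > MAX_KEY_AGE:
            findings.append(f"{k.key_id}: older than {MAX_KEY_AGE.days} days, rotate")
        if not k.created_by:
            findings.append(f"{k.key_id}: missing provenance")
    return findings


now = datetime.now(timezone.utc)
inventory = [
    KeyRecord("upgrade-authority", "hsm", now - timedelta(days=10), "ceremony-2"),
    KeyRecord("ops-hot-key", "laptop", now - timedelta(days=200), ""),
]
for finding in audit_keys(inventory, now):
    print(finding)
```

Running an audit like this in CI or on a schedule turns "rotate keys periodically" from a guideline into a failing check.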

6) Strong CI/CD and deployment controls

Require signed commits, reproducible builds, and deploy gating. Keep deployment keys offline; use ephemeral signing workflows that resist credential theft.
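Reproducible builds pay off only if deployment is gated on them. In this minimal sketch, an independent machine rebuilds the program from the tagged commit. Deployment is then refused unless two conditions hold: the SHA‑256 of that rebuild matches the artifact proposed for deployment, and enough distinct reviewers have approved. The file layout and approval count are assumptions.

```python
import hashlib
from pathlib import Path


def sha256_file(path: Path) -> str:
    """Stream a file through SHA-256 so large binaries are not loaded at once."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(1 << 16), b""):
            h.update(chunk)
    return h.hexdigest()


def deploy_gate(rebuilt: Path, proposed: Path, approvals: set[str], required: int) -> str:
    """Raise unless the independent rebuild matches the proposed artifact and
    enough distinct reviewers approved; returns the verified hash."""
    rebuilt_hash = sha256_file(rebuilt)
    if rebuilt_hash != sha256_file(proposed):
        raise RuntimeError("artifact mismatch: proposed binary is not reproducible from source")
    if len(approvals) < required:
        raise RuntimeError(f"need {required} approvals, have {len(approvals)}")
    return rebuilt_hash
```

For example, `deploy_gate(Path("rebuild/program.so"), Path("release/program.so"), {"reviewer-a", "reviewer-b"}, required=2)` could run as the last CI step before an upgrade is proposed; the paths are placeholders. The returned hash can be published alongside the timelocked proposal so observers can check it independently.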

7) Oracle and price‑feed resilience

Add feed diversity, TWAPs, deviation checks, and fallback pricing. For derivatives, sanity checks on funding rates and liquidation engines help prevent manipulation during extraction.
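Here is a minimal sketch of the median‑plus‑deviation pattern. It assumes prices come from several independent feeds and that a TWAP is computed from recent (timestamp, price) samples. The 5% deviation limit and three‑feed minimum are assumed parameters. Raising an error here stands in for whatever fallback or halt behavior the protocol defines.

```python
from statistics import median


def twap(samples: list[tuple[float, float]]) -> float:
    """Time-weighted average price from (timestamp, price) samples.

    Each price is held until the next sample; the last sample only marks
    the end of the window.
    """
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    weighted = total = 0.0
    for (t0, p0), (t1, _) in zip(samples, samples[1:]):
        dt = t1 - t0
        if dt < 0:
            raise ValueError("samples must be time-ordered")
        weighted += p0 * dt
        total += dt
    if total == 0:
        raise ValueError("window has zero length")
    return weighted / total


def robust_price(feeds: list[float], reference_twap: float,
                 max_deviation: float = 0.05, min_feeds: int = 3) -> float:
    """Median of live feeds, rejected if it strays too far from the TWAP."""
    live = [p for p in feeds if p > 0]  # drop stale or zeroed feeds
    if len(live) < min_feeds:
        raise RuntimeError("insufficient feed diversity: use fallback or halt")
    spot = median(live)
    if abs(spot - reference_twap) / reference_twap > max_deviation:
        raise RuntimeError("spot deviates from TWAP: refuse to price")
    return spot


ref = twap([(0, 100.0), (10, 110.0), (20, 105.0)])      # 105.0
print(robust_price([104.0, 105.0, 106.0, 900.0], ref))  # 105.5
```

The median means a single manipulated feed (the 900.0 above) barely moves the price, while the TWAP check catches coordinated moves across all feeds.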

8) Limit cross‑program blast radius

Use capability‑based access to limit what other programs can do to your accounts. Isolate per‑market accounting where feasible.
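Capability‑based access can be illustrated with unforgeable, market‑scoped handles. A caller can touch only the market and operations its capability names, and revocation takes effect immediately. On Solana, program‑derived addresses and account constraints might play a roughly similar role. This Python sketch only models the access rule, and every name in it is hypothetical.

```python
import secrets
from dataclasses import dataclass


@dataclass(frozen=True)
class Capability:
    market: str
    ops: frozenset[str]
    token: str  # random handle; only capabilities minted by the ledger are honored


class MarketLedger:
    """Per-market positions that other components can touch only via capabilities."""

    def __init__(self) -> None:
        self._issued: set[Capability] = set()
        self.positions: dict[str, int] = {}

    def grant(self, market: str, ops: set[str]) -> Capability:
        cap = Capability(market, frozenset(ops), secrets.token_hex(16))
        self._issued.add(cap)
        return cap

    def revoke(self, cap: Capability) -> None:
        self._issued.discard(cap)

    def adjust(self, cap: Capability, market: str, delta: int) -> None:
        # Honored only if issued here, still live, and covering this market and op.
        if cap not in self._issued or cap.market != market or "adjust" not in cap.ops:
            raise PermissionError("capability does not cover this market/operation")
        self.positions[market] = self.positions.get(market, 0) + delta


ledger = MarketLedger()
cap = ledger.grant("SOL-PERP", {"adjust"})  # hypothetical market name
ledger.adjust(cap, "SOL-PERP", 5)
try:
    ledger.adjust(cap, "BTC-PERP", 5)  # other markets stay out of reach
except PermissionError as err:
    print(err)
```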

9) Vendor and third‑party risk management

Vet custodians, relayers, and oracles with pen tests, code attestations, and contractual incident‑response clauses.

10) Monitoring, anomaly detection, and rehearsed runbooks

Build behavioral alarms for unusual admin activity, unexpected signed transactions, and off‑chain logins. Run war‑games and rehearse incident playbooks quarterly.
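Behavioral alarms can start as a handful of explicit rules. This sketch flags three kinds of admin event:

- events from unregistered signers;
- events outside an assumed approved change window (14:00–18:00 UTC);
- amounts far above a historical baseline, using a simple z‑score.

The signer list, window, and threshold are all illustrative.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from statistics import mean, pstdev


@dataclass(frozen=True)
class AdminEvent:
    signer: str
    action: str
    amount: float
    at: datetime  # assumed to be timezone-aware


KNOWN_SIGNERS = {"ops-multisig", "risk-committee"}  # hypothetical registry
CHANGE_WINDOW_HOURS = range(14, 18)                 # assumed window: 14:00-18:00 UTC


def alerts(event: AdminEvent, history: list[float], z_threshold: float = 4.0) -> list[str]:
    """Return the reasons an admin event looks anomalous (empty list = no alert)."""
    reasons = []
    if event.signer not in KNOWN_SIGNERS:
        reasons.append("unknown signer")
    if event.at.astimezone(timezone.utc).hour not in CHANGE_WINDOW_HOURS:
        reasons.append("outside approved change window")
    if len(history) >= 10:  # require some baseline before scoring amounts
        mu, sigma = mean(history), pstdev(history)
        if sigma > 0 and (event.amount - mu) / sigma > z_threshold:
            reasons.append("amount far above historical baseline")
    return reasons


history = [100.0] * 9 + [110.0]
suspicious = AdminEvent("mallory", "withdraw", 1e6, datetime(2025, 1, 1, 3, tzinfo=timezone.utc))
print(alerts(suspicious, history))
```

Rules this simple will not catch a patient attacker on their own. Their value is in paging a human quickly when a compromised key is used in an unusual way.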

11) Financial hedging and insurance planning

Maintain robust insurance funds, capped per‑market exposures, and pre‑negotiated insurer response processes to speed any recovery.
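Capped per‑market exposure is easy to enforce when positions are opened. It also makes insurance sizing tractable, because the worst case is bounded by the caps. The caps, market names, and 25% stress‑loss assumption below are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ExposureLimits:
    caps: dict[str, float]  # hypothetical per-market open-interest caps (USD notional)
    open_interest: dict[str, float] = field(default_factory=dict)

    def try_open(self, market: str, notional: float) -> bool:
        """Accept a new position only if the market stays within its cap."""
        cap = self.caps.get(market)
        if cap is None:
            return False  # markets without a configured cap get no capacity
        current = self.open_interest.get(market, 0.0)
        if current + notional > cap:
            return False
        self.open_interest[market] = current + notional
        return True


def insurance_coverage_ratio(fund: float, limits: ExposureLimits, stress_loss_pct: float) -> float:
    """Insurance fund size relative to a stressed loss with every market at its cap."""
    worst_case = sum(limits.caps.values()) * stress_loss_pct
    return fund / worst_case


limits = ExposureLimits(caps={"SOL-PERP": 1_000_000.0, "BTC-PERP": 3_000_000.0})
print(limits.try_open("SOL-PERP", 600_000.0))  # True
print(limits.try_open("SOL-PERP", 500_000.0))  # False: would exceed the SOL-PERP cap
print(insurance_coverage_ratio(500_000.0, limits, stress_loss_pct=0.25))  # 0.5
```

A ratio below 1.0 tells the team, before any incident, that a capped worst case would exhaust the fund.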

12) Social engineering and red‑team training

Run regular phishing simulations and staff training to reduce the probability of credential compromise.

Cross‑chain and derivatives‑specific mitigations

For margin, perpetual, and cross‑chain integrations, add:

- Market isolation: ensure individual markets cannot bankrupt the entire protocol — isolate margining and settlement per market.

- Controlled bridge windows: limit per‑bridge transfer amounts, require time‑locked withdrawals for large cross‑chain moves, and incorporate manual review thresholds.

- Liquidation safety rails: multi‑oracle triggers for mass liquidations, capped per‑block liquidation sizes, and staggered execution to avoid oracle manipulation.

- Funding and index sanity checks: validate funding payments against expected ranges and suspend funding if anomalies are detected.

These controls reduce the payoff for attackers who gain limited operational access by making large thefts slower, more detectable, and harder to extract.
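The controlled bridge window can be sketched as a rolling‑window rate limiter with a manual‑review tier. The window length, cap, review threshold, and delay below are assumed values. In this simplified version, transfers queued for review still consume window capacity, which errs on the side of caution.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class BridgeLimiter:
    window_seconds: float       # rolling window length
    window_cap: float           # max total outflow accepted per window
    review_threshold: float     # single transfers at/above this go to manual review
    large_delay_seconds: float  # time lock applied to reviewed transfers
    _recent: deque[tuple[float, float]] = field(default_factory=deque, init=False)

    def _used(self, now: float) -> float:
        # Drop transfers that have aged out of the window, then sum the rest.
        while self._recent and now - self._recent[0][0] >= self.window_seconds:
            self._recent.popleft()
        return sum(amount for _, amount in self._recent)

    def decide(self, amount: float, now: float) -> str:
        if self._used(now) + amount > self.window_cap:
            return "reject: window cap exceeded"
        # Queued transfers also consume window capacity in this sketch.
        self._recent.append((now, amount))
        if amount >= self.review_threshold:
            return f"queued for review until t={now + self.large_delay_seconds:.0f}"
        return "release"


b = BridgeLimiter(window_seconds=3600, window_cap=1_000, review_threshold=500, large_delay_seconds=86_400)
print(b.decide(100, now=0))   # release
print(b.decide(600, now=10))  # queued for review until t=86410
print(b.decide(400, now=20))  # reject: window cap exceeded
```

Even with a fully compromised admin path, an attacker facing this limiter can move at most one window's cap before defenders see the queue filling up.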

Practical next steps for teams (30/60/90 day plan)

- 0–30 days: enforce multisig on all admin keys, add timelocks, and enable on‑chain pause buttons. Run a table‑top incident simulation.

- 30–60 days: harden CI/CD, rotate keys to HSM/MPC solutions, and publish a public upgrade process with clear timelines.

- 60–90 days: implement oracle redundancy, market isolation, and negotiate improved insurance terms tied to implemented controls.

Closing thoughts

The Drift incident is a reminder: high‑value DeFi protocols are attractive targets not only for opportunists but for persistent, well‑resourced actors. The breach demonstrates how social engineering and operational compromise can defeat even audited smart contracts if governance and key‑management practices lag behind.

For DeFi security teams, the answer is not just more audits — it is operational hardening: stringent multisig, timelocks, vendor due diligence, and the assumption that adversaries will try to manipulate humans and suppliers first. Implementing the prioritized checklist above will reduce the likelihood that months of reconnaissance end in a multi‑hundred‑million dollar loss.

Sources

- Aped.ai: https://aped.ai/news/drift-protocol-exploit-took-months-to-prepare?utm_source=snapi

- Cointelegraph: https://cointelegraph.com/news/drift-protocol-exploit-preparation-preliminary-findings?utm_source=rss_feed&utm_medium=rss&utm_campaign=rss_partner_inbound

- Blockonomi: https://blockonomi.com/drift-protocol-hack-how-a-north-korean-group-spent-six-months-infiltrating-a-defi-protocol/