What Bittensor’s Subnet 3 and a 72B Model Mean for Decentralized AI Compute

Summary

Executive snapshot

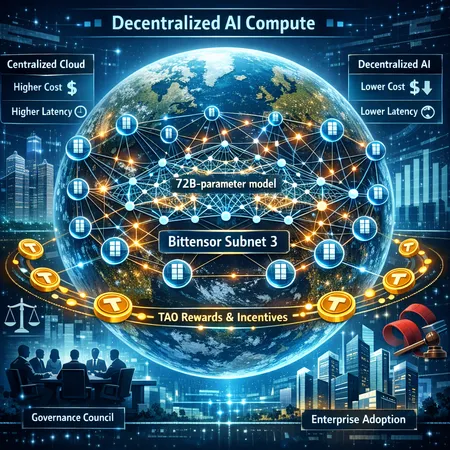

Bittensor’s Subnet 3 recently trained a 72‑billion‑parameter model across more than 70 nodes worldwide, a practical milestone that converts theory into engineering reality. The experiment shows decentralized training can reach large model scales, but it also clarifies where decentralized stacks must improve to compete with centralized labs on cost, latency, reproducibility, and governance. For product leads and crypto‑native AI investors weighing decentralized compute as a competitive layer, the tradeoffs are now empirical rather than speculative.

What Subnet 3 achieved — the headline and why it matters

Subnet 3’s run is meaningful for three reasons: scale, distribution, and economic coordination. At 72B parameters, the model sits in the size range of many contemporary foundation models, and training it across 70+ nodes demonstrates that geographically distributed compute can be orchestrated to solve a single large training task. Practically, this validates two core ideas: (1) that peer‑to‑peer contributions can assemble effective multi‑GPU clusters without a single central operator, and (2) that tokenized incentives can bootstrap compute supply and participation. Coverage of the experiment frames it as decentralized compute moving from proof‑of‑concept to real workload viability (Blockonomi’s breakdown).

Technical overview: how Subnet 3 coordinates distributed training

At a high level, Subnet 3 layers an on‑chain coordination plane atop off‑chain compute and communication. Nodes run the model shards and exchange gradients or parameter updates by using a hybrid of distributed training techniques adapted to the network environment: chunked parameter synchronization, gradient compression, and asynchronous update protocols to tolerate heterogeneity and intermittent connectivity. On‑chain components record reputation, handle staking and rewards in TAO, and serve as the canonical registry for model versions and contributor metadata.
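Gradient compression is the easiest of these techniques to picture. The sketch below shows top‑k sparsification with error feedback, a widely used compression scheme for bandwidth‑limited training. It illustrates the general technique under our own assumptions; the function names are hypothetical and this is not Subnet 3’s actual code.

```python
from typing import List, Tuple

Sparse = List[Tuple[int, float]]  # (index, value) pairs actually sent over the wire


def topk_compress(grad: List[float], residual: List[float], k: int) -> Tuple[Sparse, List[float]]:
    """Top-k sparsification with error feedback.

    Adds the residual left over from earlier rounds, transmits only the k
    largest-magnitude entries, and returns the untransmitted remainder as the
    new residual so no gradient signal is permanently discarded.
    """
    corrected = [g + r for g, r in zip(grad, residual)]
    # Indices of the k entries with the largest absolute value.
    top = sorted(range(len(corrected)), key=lambda i: abs(corrected[i]), reverse=True)[:k]
    kept = set(top)
    sparse = [(i, corrected[i]) for i in sorted(kept)]
    # Whatever was not sent carries over to the next round.
    new_residual = [0.0 if i in kept else c for i, c in enumerate(corrected)]
    return sparse, new_residual


def decompress(sparse: Sparse, size: int) -> List[float]:
    """Rebuild a dense gradient on the receiving node (missing entries are zero)."""
    dense = [0.0] * size
    for i, v in sparse:
        dense[i] = v
    return dense


grad = [0.5, -2.0, 0.1, 3.0]
sparse, residual = topk_compress(grad, [0.0] * len(grad), k=2)
print(sparse)    # [(1, -2.0), (3, 3.0)]
print(residual)  # [0.5, 0.0, 0.1, 0.0]
```

Only two of four values cross the network here. In practice k is a small fraction of each tensor, which is where the savings on Internet links come from, and the residual ensures dropped entries are eventually sent once they accumulate.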

Key engineering patterns in Subnet 3:

- Partitioned model hosting: large models are sharded across nodes according to each node’s available VRAM and compute class. This resembles the model‑parallel approaches used in centralized setups but explicitly accounts for variable node capabilities.

- Asynchronous and stale‑tolerant updates: to reduce waiting on straggler nodes, Subnet 3 uses asynchrony and update staleness bounds rather than strict synchronous all‑reduce on every step.

- Incentive feedback loop: on‑chain scoring metrics (quality of contributed logits/gradients, uptime, and peer evaluations) convert into TAO rewards and bandwidth priority.
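To make the partitioned‑hosting pattern concrete, the sketch below first estimates the training‑state footprint of a 72B model and then assigns contiguous layer blocks in proportion to each node’s VRAM. The 16 bytes per parameter figure is an assumption (mixed‑precision Adam: bf16 weights and gradients, fp32 master weights, two fp32 optimizer moments) that ignores activations; the 80‑layer model and node names are hypothetical.

```python
from typing import Dict

# Assumption: mixed-precision Adam needs about 16 bytes per parameter
# (bf16 weights and grads, fp32 master weights, two fp32 Adam moments).
BYTES_PER_PARAM = 16


def training_memory_gb(params: float, bytes_per_param: int = BYTES_PER_PARAM) -> float:
    """Rough training-state footprint in GB, ignoring activations."""
    return params * bytes_per_param / 1e9


def partition_layers(num_layers: int, vram_gb: Dict[str, float]) -> Dict[str, range]:
    """Assign each node a contiguous block of layers roughly proportional to its VRAM."""
    total = sum(vram_gb.values())
    nodes = list(vram_gb)
    plan: Dict[str, range] = {}
    start, cumulative = 0, 0.0
    for i, node in enumerate(nodes):
        cumulative += vram_gb[node]
        # The last node absorbs rounding so every layer is assigned exactly once.
        end = num_layers if i == len(nodes) - 1 else round(num_layers * cumulative / total)
        plan[node] = range(start, end)
        start = end
    return plan


print(training_memory_gb(72e9))  # 1152.0
plan = partition_layers(80, {"node-a": 80, "node-b": 40, "node-c": 40})
print(plan)  # {'node-a': range(0, 40), 'node-b': range(40, 60), 'node-c': range(60, 80)}
```

Under these assumptions, roughly 1,152 GB of training state spread evenly over 70 nodes is about 16.5 GB each before activations. Because real nodes are not uniform, a proportional plan like this one keeps a large card from idling while a small one overflows.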

The resulting system accepts an explicit tradeoff: it gains scale and decentralization in exchange for higher communication overhead and more complex failure modes than a homogeneous data center cluster.

Distributed training results and the efficiency story

Subnet 3’s experiment reports successful convergence to a usable model, which is the technical headline. But raw wall‑clock cost and training efficiency do not yet match those of top hyperscalers. Why? Distributed training efficiency is dominated by three variables: interconnect bandwidth and latency, batch parallelism efficiency, and hardware utilization. In a decentralized mesh, the interconnects are Internet links with variable latency, which either increases gradient staleness or forces smaller step sizes. Nodes also vary in GPU generation and availability, which fragments utilization.
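The staleness problem can be illustrated with a toy asynchronous parameter server. The sketch below rejects gradients that are more than `max_staleness` versions old and divides the learning rate by (1 + staleness) for the rest, one heuristic from the asynchronous‑SGD literature. The class and its parameters are hypothetical; we are not describing the mechanism Subnet 3 actually uses.

```python
from typing import List


class StalenessBoundedServer:
    """Toy parameter server for asynchronous SGD with a hard staleness bound.

    Staleness is how many updates landed between the version a worker read
    and the moment its gradient arrives. Gradients older than
    `max_staleness` are rejected; accepted ones use a learning rate divided
    by (1 + staleness), so late arrivals move the model less.
    """

    def __init__(self, params: List[float], lr: float, max_staleness: int):
        self.params = list(params)
        self.lr = lr
        self.max_staleness = max_staleness
        self.version = 0  # bumped on every accepted update

    def apply(self, grad: List[float], read_version: int) -> bool:
        staleness = self.version - read_version
        if staleness > self.max_staleness:
            return False  # too stale: the worker should pull fresh parameters
        step = self.lr / (1 + staleness)
        self.params = [p - step * g for p, g in zip(self.params, grad)]
        self.version += 1
        return True


server = StalenessBoundedServer([1.0, 1.0], lr=0.1, max_staleness=2)
# Four workers all read version 0, then report gradients one after another.
accepted = [server.apply([1.0, 1.0], read_version=0) for _ in range(4)]
print(accepted)        # [True, True, True, False]
print(server.version)  # 3
```

A tighter bound protects convergence but wastes more work from slow links; a looser bound keeps stragglers useful but lets older gradients in, which is exactly the step‑size pressure described above.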

In practice, decentralized training will often consume 1.2x–3x the resources of a well‑tuned centralized cluster training the same model, depending on network topology and aggregation techniques. That said, the marginal cost can still be attractive if decentralized nodes are compensated below hyperscaler rates, or if the model owner values censorship‑resistance, geographic distribution, or tokenized governance.
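The cost argument reduces to a break‑even condition: if a decentralized run needs r times the GPU‑hours of the centralized baseline, it is cheaper only when its hourly price is below the centralized price divided by r. The prices and GPU‑hour totals below are hypothetical placeholders, not market data.

```python
def run_cost(baseline_gpu_hours: float, price_per_gpu_hour: float, overhead: float = 1.0) -> float:
    """Cost of a run needing `overhead` times the GPU-hours of a well-tuned centralized cluster."""
    return baseline_gpu_hours * overhead * price_per_gpu_hour


def breakeven_price(central_price: float, overhead: float) -> float:
    """Highest decentralized hourly price at which the run costs no more than the centralized one."""
    return central_price / overhead


CENTRAL_PRICE = 2.00      # hypothetical $/GPU-hour
BASELINE_HOURS = 100_000  # hypothetical GPU-hours on the centralized cluster

for overhead in (1.2, 2.0, 3.0):
    print(f"overhead {overhead}x -> breakeven ${breakeven_price(CENTRAL_PRICE, overhead):.2f}/GPU-hour")
# overhead 1.2x -> breakeven $1.67/GPU-hour
# overhead 2.0x -> breakeven $1.00/GPU-hour
# overhead 3.0x -> breakeven $0.67/GPU-hour
```

With these placeholder numbers, a network charging $0.80/GPU‑hour turns a $200,000 centralized run into about $160,000 at 2x overhead, but into $240,000 at 3x, so the efficiency gap matters as much as the headline price.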

How decentralized stacks compare to centralized labs

Centralized labs (hyperscalers, large AI startups) still hold advantages in raw throughput, predictable SLAs, curated datasets, and optimized system stacks (high‑bandwidth interconnects, NVLink, custom scheduling). They win on training time and repeatability. Decentralized approaches, however, trade off differently on each axis:

- Costs: centralized providers capture economies of scale and custom hardware, making them cheaper per effective FLOP when utilization is high. Decentralized networks can offer cost arbitrage using idle GPUs, mining rigs repurposed for compute, or regionally cheaper energy, but they need strong orchestration to close the efficiency gap.

- Latency and convergence: centralized infra reduces synchronization overhead and supports large effective batch sizes. Decentralized training accepts higher variance and may require algorithmic adaptations (staleness‑aware optimizers, gradient sparsification).

- Governance and trust: centralized AI labs control model weights, data pipelines, and update cadence. In contrast, subnet governance (on‑chain proposals, staking, and reputation) can decentralize decision rights, enable community audits, and distribute economic upside — at the cost of more complex coordination.

Where decentralized networks can pull ahead is in composability (open model registries, token‑mediated monetization), resistance to single‑party policy enforcement, and new incentive architectures that reward small, consistent contributions rather than centralized capital.

TAO token: utility, incentives, and economic design

TAO turns abstract compute participation into an economic primitive. Within Subnet 3, token mechanics serve several functions:

- Rewarding compute and quality: TAO is paid to nodes based on verified contribution metrics (model improvement, uptime, response quality). This monetizes otherwise idle GPU time and aligns node behavior to model quality.

- Access and priority: TAO can function as a stake or utility credit that grants priority access to faster aggregation, data slices, or inference serving capacity.

- Governance and dispute resolution: token holders can vote on protocol upgrades, curate training datasets, and set penalty rules for malicious activity.
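The reward function can be sketched as a weighted score per node, with each epoch’s emission split in proportion to those scores. The metric weights, metric names, and per‑epoch emission are hypothetical, and this proportional split is a simplification for illustration, not Bittensor’s actual Yuma Consensus.

```python
from typing import Dict

# Hypothetical weights over the contribution metrics named above.
# This is NOT Bittensor's Yuma Consensus, only a proportional-split illustration.
METRIC_WEIGHTS = {"improvement": 0.6, "uptime": 0.2, "response_quality": 0.2}


def node_score(metrics: Dict[str, float]) -> float:
    """Weighted sum of a node's metrics, each assumed normalized to [0, 1]."""
    return sum(w * metrics.get(name, 0.0) for name, w in METRIC_WEIGHTS.items())


def split_emission(epoch_tao: float, nodes: Dict[str, Dict[str, float]]) -> Dict[str, float]:
    """Divide one epoch's TAO emission in proportion to node scores."""
    scores = {node: node_score(m) for node, m in nodes.items()}
    total = sum(scores.values())
    if total == 0:
        return {node: 0.0 for node in nodes}
    return {node: epoch_tao * s / total for node, s in scores.items()}


payouts = split_emission(10.0, {
    "trainer": {"improvement": 1.0, "uptime": 1.0, "response_quality": 1.0},
    "idler":   {"improvement": 0.0, "uptime": 1.0, "response_quality": 1.0},
})
print({k: round(v, 2) for k, v in payouts.items()})  # {'trainer': 7.14, 'idler': 2.86}
```

The node that contributes no model improvement still earns over a quarter of the emission through uptime and response quality alone. Shifting weight toward verified improvement shrinks that share, which is the lever behind the first design tension below.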

Design tensions to watch:

- Short‑term reward capture vs. long‑term model health: naive payout schemes incentivize brute‑force contribution rather than high‑quality gradients or careful dataset curation. Mechanisms like delayed rewards, slashing for poisoning, and reputation‑based multipliers help steer behavior.

- Sybil and Byzantine resilience: staking mitigates fake nodes, but economic parameters must be tuned so attackers can’t cheaply buy influence and corrupt training with poisoned updates.

- Liquidity and price impact: as TAO becomes a payment rail for compute, demand shocks (e.g., a popular enterprise subnet) could introduce token price volatility that complicates predictable budgeting for long training runs.
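The three mitigations in the first bullet (delayed rewards, slashing for poisoning, reputation‑based multipliers) can be combined in a small account model. Every constant here is a hypothetical tuning choice, and detection of poisoned updates is assumed to happen elsewhere; the sketch only shows how the pieces interact.

```python
from dataclasses import dataclass, field
from typing import List

ALPHA = 0.1           # EMA weight on the newest epoch: reputation changes slowly
VESTING_EPOCHS = 3    # hypothetical delay before a reward becomes spendable
SLASH_FRACTION = 0.5  # hypothetical share of stake burned after a poisoning finding


@dataclass
class NodeAccount:
    stake: float
    reputation: float = 0.5                             # hypothetical starting value in [0, 1]
    pending: List[float] = field(default_factory=list)  # unvested rewards, oldest first
    balance: float = 0.0                                # vested, spendable TAO


def record_epoch(acct: NodeAccount, base_reward: float, quality: float) -> None:
    """Update reputation from this epoch's quality score and queue a delayed reward."""
    acct.reputation = (1 - ALPHA) * acct.reputation + ALPHA * quality
    # Reputation acts as a multiplier on the base reward.
    acct.pending.append(base_reward * acct.reputation)
    # Only rewards older than the vesting window are released.
    if len(acct.pending) > VESTING_EPOCHS:
        acct.balance += acct.pending.pop(0)


def slash(acct: NodeAccount) -> None:
    """Penalize a node caught submitting poisoned updates."""
    acct.stake *= 1 - SLASH_FRACTION
    acct.pending.clear()  # unvested rewards are forfeited
    acct.reputation = 0.0


node = NodeAccount(stake=100.0)
for _ in range(4):
    record_epoch(node, base_reward=10.0, quality=1.0)
print(round(node.balance, 2), len(node.pending))  # 5.5 3
slash(node)
print(node.stake, node.pending, node.reputation)  # 50.0 [] 0.0
```

The vesting queue is what gives slashing teeth: an attacker who poisons the model and is caught loses not only stake but every reward still inside the window, while the slow EMA means reputation must be rebuilt over many honest epochs.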

Overall, TAO becomes not only the accounting unit for compute but also the governance lever that turns a loose mesh of providers into a coordinated training market.

Practical enterprise use cases for decentralized training

Enterprises won’t immediately migrate away from centralized providers for mission‑critical foundational model training, but several near‑term and medium‑term use cases are realistic:

- Hybrid private subnets: enterprises run a permissioned subnet to keep sensitive data within consortium nodes while leveraging public nodes for non‑sensitive compute peaks. This preserves data governance and reduces cost for burst capacity.

- Edge and domain‑specific models: decentralized training excels where data is geographically dispersed (IoT sensors, telecom logs) and sharing raw data is impractical. Federated or subnet‑based fine‑tuning lets models learn from dispersed sources while preserving privacy.

- Cost arbitrage and geographic resiliency: companies with intermittent large training jobs can tap decentralized capacity in regions with cheaper energy or surplus GPU time, especially if the protocol supports verifiable compute and legal SLAs.

- Model marketplaces and pay‑per‑use inference: companies can monetize bespoke models through tokenized access, with customers paying in TAO for inference quotas or fine‑tuning runs.
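For the dispersed‑data case, the standard baseline is federated averaging (FedAvg): each site trains locally and only parameters are shared, weighted by how many samples each site used. The sketch below shows the aggregation step; the two sites are hypothetical, and whether a given subnet uses FedAvg or another aggregator is not something the source states.

```python
from typing import List, Tuple


def fedavg(client_updates: List[Tuple[List[float], int]]) -> List[float]:
    """Federated averaging: weight each site's locally trained parameters by its sample count.

    Only parameters leave each site; the raw data stays where it was collected.
    """
    total = sum(n for _, n in client_updates)
    if total == 0:
        raise ValueError("no training samples reported")
    dim = len(client_updates[0][0])
    averaged = [0.0] * dim
    for params, n in client_updates:
        weight = n / total
        for i, p in enumerate(params):
            averaged[i] += weight * p
    return averaged


# Two hypothetical sites, e.g. regional telecom log collectors.
print(fedavg([([1.0, 2.0], 100), ([3.0, 4.0], 300)]))  # [2.5, 3.5]
```

The site with three times the data pulls the average three times as hard, which is usually what you want for fine‑tuning, though it also means a single large site can dominate unless weights are capped.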

Adoption will depend on integrations (APIs, SDKs), legal/policy assurances, and the ability to certify node behavior for compliance and audit trails. Bitlet.app and similar platforms that bridge crypto payments and simple onboarding could play a role in simplifying commercial flows for enterprises experimenting with tokenized compute.

Regulatory, operational, and security hurdles

Decentralized training introduces novel regulatory and operational complexity that enterprises and regulators will scrutinize:

- Data sovereignty and privacy: cross‑border model training raises jurisdictional questions about who controls and is responsible for data used in updates. Enterprises need mechanisms for data minimization, audit logs, and potentially zero‑knowledge verification of training steps.

- Export controls and dual‑use: large models can be subject to export restrictions and national security rules. A global mesh complicates compliance because model weights may traverse multiple legal domains.

- Energy and environmental scrutiny: the energy footprint of large‑scale training is real. Partnerships between mining/energy players and AI companies (including proposals to use nuclear or other low‑carbon energy) show an industry grappling with energy supply and carbon intensity (CoinTribune on miners and energy). Decentralized networks must surface energy provenance and possibly price in carbon costs.

- Integrity and poisoning attacks: distributed contributors increase the attack surface for model‑poisoning, backdoors, or data leakage. Robust on‑chain penalties, verifiable compute proofs, and cross‑validation strategies are needed to manage this risk.

- SLAs and liability: enterprises require predictable performance and clear lines of legal liability if models behave badly. Contractual frameworks that link on‑chain reputation to off‑chain legal recourse need to be standardized.
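On the poisoning risk, one simple and well‑known defense is robust aggregation. The sketch below uses a coordinate‑wise trimmed mean as a named stand‑in; it is not a description of what Subnet 3 deploys, and the update vectors are made up for illustration.

```python
from typing import List


def trimmed_mean(updates: List[List[float]], trim: int) -> List[float]:
    """Coordinate-wise trimmed mean of worker updates.

    For each coordinate, drop the `trim` largest and `trim` smallest values
    and average the rest, so up to `trim` arbitrary (Byzantine) updates
    cannot drag any coordinate outside the range of honest values.
    """
    n = len(updates)
    if n <= 2 * trim:
        raise ValueError("need more than 2 * trim updates")
    aggregated = []
    for column in zip(*updates):
        kept = sorted(column)[trim:n - trim]
        aggregated.append(sum(kept) / len(kept))
    return aggregated


honest = [[1.0, 1.0], [1.2, 0.8], [0.8, 1.2]]
poisoned = [[100.0, -100.0]]
robust = trimmed_mean(honest + poisoned, trim=1)
print([round(x, 2) for x in robust])  # [1.1, 0.9]
```

A plain mean of the same four updates would put the first coordinate at 25.75; trimming one value from each end keeps it at 1.1. The cost is that `trim` must be set at or above the number of attackers you expect, and honest outliers get discarded too.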

Operationally, tooling gaps—reliable orchestration, reproducible checkpoints, model debugging across heterogeneous hardware, and verifiable audits—remain the most immediate friction points.

Looking forward: primitives that will matter

If decentralized training is to become a lasting competitive layer, several primitives need to mature:

- Verifiable compute: succinct proofs or attestation systems that let a model owner confirm a claimed compute step without full replay.

- Reputation and staking frameworks that reflect long‑term contribution quality rather than short windows of activity.

- Adaptive distributed optimizers tuned for high‑latency, heterogeneous networks (staleness‑aware algorithms, aggressive compression, redundancy‑aware aggregation).

- Hybrid architectures: private/public subnet models where enterprises can retain data control but source cryptoeconomic incentives and elasticity from public nodes.
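Succinct proofs of full training steps are still largely a research problem. A much simpler scheme that captures the "confirm without full replay" goal is commit‑and‑spot‑check: the worker publishes a hash of every intermediate state, and a verifier re‑executes a few randomly chosen steps. To be clear, this is not a succinct proof; the function names and the toy update rule are hypothetical, and the scheme assumes steps are bitwise deterministic.

```python
import hashlib
import json
import random
from typing import Callable, List

State = List[float]
StepFn = Callable[[State, int], State]


def digest(state: State) -> str:
    """Commitment to a training state (here: SHA-256 of its JSON encoding)."""
    return hashlib.sha256(json.dumps(state).encode()).hexdigest()


def train_and_commit(state: State, step_fn: StepFn, steps: int):
    """Worker side: run every step, keep the states, publish one hash per state."""
    states = [list(state)]
    for t in range(steps):
        states.append(step_fn(states[-1], t))
    return states, [digest(s) for s in states]


def spot_check(commits: List[str], reveal: Callable[[int], State],
               step_fn: StepFn, samples: int, rng: random.Random) -> bool:
    """Verifier side: replay a random subset of steps instead of the whole run."""
    for t in rng.sample(range(len(commits) - 1), samples):
        before = reveal(t)  # worker must disclose the state it committed at step t
        if digest(before) != commits[t]:
            return False
        if digest(step_fn(before, t)) != commits[t + 1]:
            return False
    return True


def toy_step(s: State, t: int) -> State:
    # Hypothetical deterministic update standing in for one optimizer step.
    return [x * 0.5 + t for x in s]


states, commits = train_and_commit([1.0, 2.0], toy_step, steps=10)
print(spot_check(commits, lambda t: states[t], toy_step, samples=3, rng=random.Random(0)))  # True
```

If a worker fakes a fraction f of the steps, s random checks miss every fake with probability at most (1 - f)^s, so verification cost scales with the confidence you want rather than with run length. The hard part in practice is determinism: floating‑point results can differ across GPU generations, which is one reason heterogeneous networks need the attestation primitives listed above.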

These primitives will reduce the efficiency gap while preserving the decentralization and economic properties that make networks like Bittensor interesting.

Conclusion

Subnet 3’s 72B model run is a milestone: it pushes decentralized training out of theoretical discussion and into measurable engineering territory. For product leads and crypto‑native AI investors, the takeaway is pragmatic optimism. Decentralized AI is not yet a drop‑in replacement for hyperscale cloud training, but it is a viable, complementary layer that introduces new tradeoffs — tokenized incentives (TAO), governance, and censorship‑resistance on one side; higher communication overhead and operational complexity on the other. The next 12–24 months will be about maturation: stronger verification, better economic design, and integration with enterprise compliance and SLA expectations. If those pieces fall into place, decentralized training could become a durable, competitive layer alongside centralized providers.